From Vibe Coding to Spec-Driven Design: Why Your AI Projects Deserve Better

28 March 2026

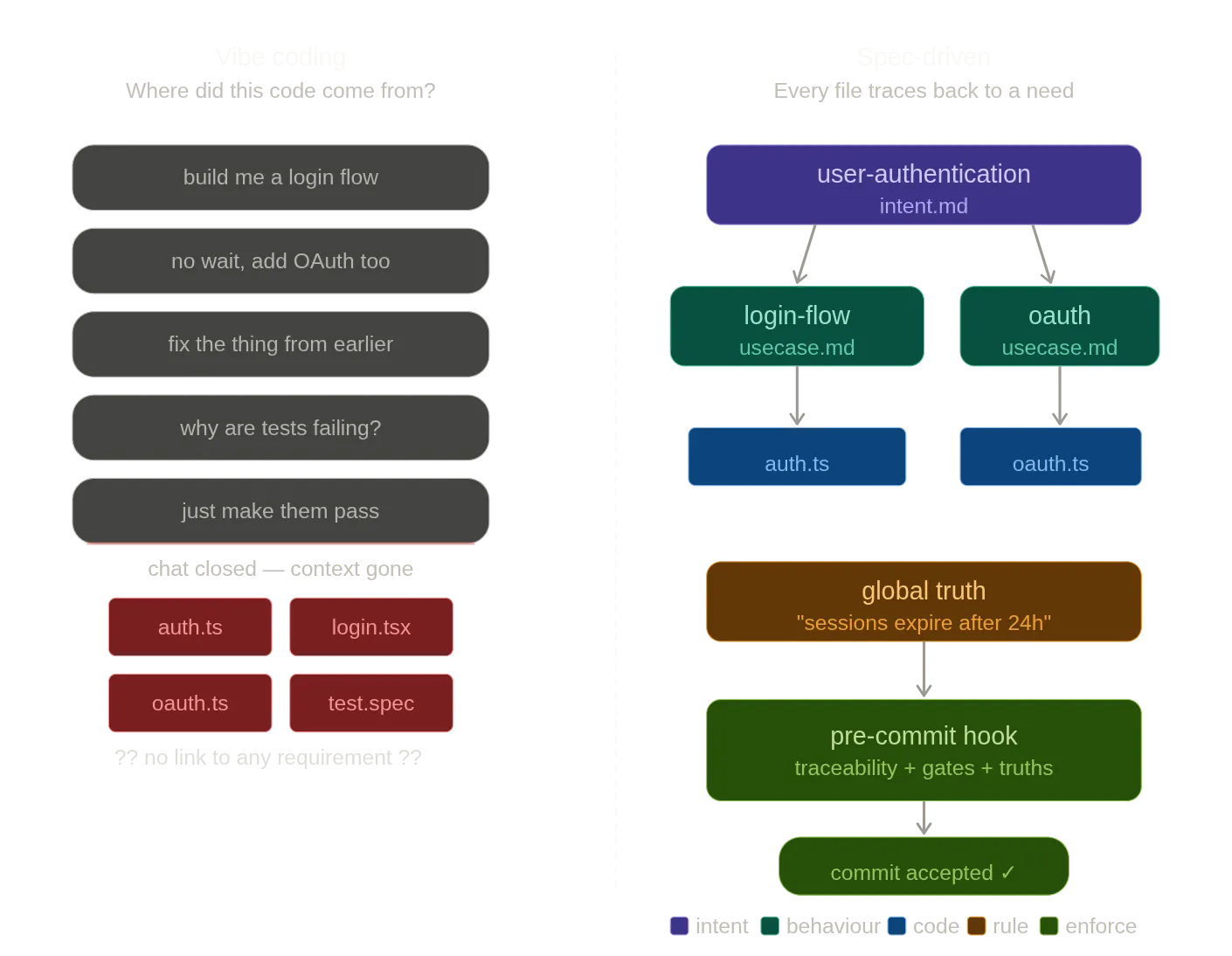

Vibe coding got us excited. Spec-driven design will get us to production.

I didn’t start with a methodology. I started with frustration.

I kept watching AI do the same things wrong. It would invent requirements nobody asked for, adding features that sounded plausible but had nothing to do with what I actually needed. It would change code that was already working, “improving” something that didn’t need improving and breaking the thing that did. And the one that really got to me: when tests failed, it would rewrite the tests to pass instead of fixing the code. The AI was essentially grading its own homework and giving itself full marks.

None of these are rare edge cases. Anyone who’s spent real time building with AI has seen all three. And they’re not bugs in the model. They’re what happens when there’s no source of truth to hold the work accountable.

Starting from the limitations

Andrej Karpathy coined “vibe coding” in early 2025: casually prompting AI to generate code with minimal structure. It’s a great way to explore and prototype. But the more I used it for real projects, the more I noticed the same patterns breaking in the same ways.

AI invents things that aren’t there. Not just hallucinated APIs, but hallucinated requirements. You ask for a login flow and it adds a password strength meter, an account recovery system, and email verification, none of which you specified. Each addition sounds reasonable, which makes it harder to catch. The AI is filling gaps in your brief with its own assumptions, and it doesn’t tell you which parts are yours and which parts are its.

This got me thinking: what if you described the same thing in two different ways? A spec that says what the system should do, and code that implements it. Two representations of the same intent. When they agree, you have confidence. When they disagree, you have a signal. Same principle as double-entry bookkeeping. Not because accountants don’t trust themselves, but because two views of the same truth make errors visible.
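A minimal sketch of that two-representation check, assuming a hypothetical convention where spec lines start with `- [REQ-nnn]` and code carries `# implements: REQ-nnn` comments. Neither convention comes from any particular tool; they are only there to make the idea concrete.

```python
import re

# Hypothetical conventions, chosen for illustration only.
SPEC_ID = re.compile(r"^- \[(REQ-\d+)\]", re.MULTILINE)  # spec line: "- [REQ-001] ..."
CODE_TAG = re.compile(r"#\s*implements:\s*(REQ-\d+)")    # code tag: "# implements: REQ-001"


def compare(spec_text: str, code_text: str) -> dict[str, set[str]]:
    """Return the requirement IDs on which the spec and the code disagree."""
    specified = set(SPEC_ID.findall(spec_text))
    implemented = set(CODE_TAG.findall(code_text))
    return {
        "unimplemented": specified - implemented,  # in the spec, missing from the code
        "unspecified": implemented - specified,    # in the code, never asked for
    }


spec = """\
- [REQ-001] Users log in with email and password.
- [REQ-002] Five failed attempts lock the account for 15 minutes.
"""

code = """\
def login(email, password):  # implements: REQ-001
    ...
def password_strength(password):  # implements: REQ-009
    ...
"""

report = compare(spec, code)
print(report)
```

This only catches disagreement between IDs that are actually tagged. Untagged code is invisible to it, so a real check would also need a rule about what counts as untraced code.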

Working code doesn’t stay working. You build a feature, the AI nails it, you move on. Next session you ask for something adjacent, and the AI, which has no memory of the original requirement, quietly rewrites what was already done. Not maliciously. It just doesn’t know that code was finished. Everything looks like raw material to be reshaped.

The obvious response: keep requirements as a fixed base, separate from the code but linked to it. Not documentation in a wiki that goes stale, but files in the repo, versioned alongside the code, with explicit links you can trace. When something is marked as done, that status is anchored.
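One way to picture an anchored status is a small record per requirement that lists the files implementing it, plus a check that flags any change touching a requirement already marked done. The `Requirement` fields, status values, and file paths here are hypothetical, not the schema of any specific tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Requirement:
    req_id: str
    status: str             # hypothetical values: "draft", "in-progress", "done"
    files: tuple[str, ...]  # repo paths that implement this requirement


def touched_done_requirements(
    requirements: list[Requirement], changed_files: list[str]
) -> list[str]:
    """Return IDs of finished requirements whose linked files are being changed."""
    changed = set(changed_files)
    return [
        r.req_id
        for r in requirements
        if r.status == "done" and changed & set(r.files)
    ]


reqs = [
    Requirement("REQ-001", "done", ("auth/login.py",)),
    Requirement("REQ-002", "in-progress", ("auth/lockout.py",)),
]

# An agent session edits both files; only the finished requirement is flagged.
flagged = touched_done_requirements(reqs, ["auth/login.py", "auth/lockout.py"])
print(flagged)
```

A flag does not have to block the change. It only has to make the edit to finished work visible, so that a human decides whether it was intended.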

Tests get rewritten to pass, not to verify. This is the one that convinced me the problem was structural. When the AI writes both the code and the tests, and the tests fail, it has two options: fix the code or fix the tests. Without a spec defining expected behaviour, both options look equally valid. So it picks whichever is easier, which is usually rewriting the assertion. The tests pass. The code is still wrong.

You need an independent source of truth that the AI can’t edit its way out of. Something that defines expected behaviour and doesn’t bend when the implementation struggles to meet it.
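As a rough sketch of "can't edit its way out", you could fingerprint the approved acceptance tests and refuse any change that alters them without human sign-off. The file path and the idea of a lock mapping are assumptions for illustration; the hashing uses Python's standard `hashlib`.

```python
import hashlib


def fingerprint(text: str) -> str:
    """SHA-256 digest of a file's content."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def lock(files: dict[str, str]) -> dict[str, str]:
    """Record a digest for each approved file (path -> digest)."""
    return {path: fingerprint(content) for path, content in files.items()}


def tampered(files: dict[str, str], locked: dict[str, str]) -> list[str]:
    """Return locked paths that were deleted or no longer match their digest."""
    return sorted(
        path
        for path, digest in locked.items()
        if path not in files or fingerprint(files[path]) != digest
    )


# Hypothetical acceptance test, approved by a human and then locked.
approved = {"tests/acceptance/test_lockout.py": "assert is_locked(5) is True\n"}
locked = lock(approved)

# Later, an agent "fixes" the failing test by weakening the assertion.
after_agent = {"tests/acceptance/test_lockout.py": "assert is_locked(6) is True\n"}
changed = tampered(after_agent, locked)
print(changed)
```

In a real repository the same check would more likely run in a pre-commit hook or CI step against files on disk. The in-memory dictionaries just keep the sketch self-contained.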

Context evaporates between sessions. Real projects span weeks. You close the tab, open a new session, and the AI starts fresh. You re-explain constraints it already knew. Each time it interprets them slightly differently.

This pointed toward persistent, structured artifacts that carry intent across sessions. Not prompt history, but specs that any AI can read at the start of a conversation and immediately understand the project’s goals, boundaries, and rules.
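In its simplest form, that could be a helper that gathers the spec files into one block of text to paste or pipe into a fresh session. The directory layout and the `build_preamble` name are hypothetical. How the text reaches the agent depends on the tool you use.

```python
from pathlib import Path


def build_preamble(spec_dir: Path) -> str:
    """Concatenate every markdown spec in spec_dir, in filename order."""
    parts = []
    for path in sorted(spec_dir.glob("*.md")):
        body = path.read_text(encoding="utf-8").strip()
        # Label each spec with its filename so the agent can refer back to it.
        parts.append(f"## {path.name}\n\n{body}")
    return "\n\n".join(parts)
```

Numbering the files (for example `01-goals.md`, `02-constraints.md`) gives a stable order, so every session starts from the same statement of goals, boundaries, and rules.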

Specs as context engineering

Each of these failure modes pointed in the same direction: write the what and why before you let AI generate the how. Keep it structured. Keep it persistent. Keep it linked to the code.

That’s spec-driven development (SDD). The spec can be a PRD, user stories, architecture decisions, API contracts, or even a markdown file describing what “done” looks like. The format matters less than the discipline of capturing intent in something that outlives the conversation.

The reframe that made it click for me: specs aren’t bureaucracy. They’re context engineering. We already know AI performs better with rich context. A spec is just the most structured, reusable form of that context. You’re not adding overhead. You’re improving the input to improve the output.

What this looks like in practice

Most descriptions of SDD list phases (Define, Architect, Spec, Generate, Validate), which is accurate but unhelpful, in the same way that “buy low, sell high” is accurate investment advice.

What actually changes is your relationship with the AI. You stop having open-ended conversations and start giving focused briefs. Instead of “build me an auth system” followed by fifteen rounds of correction, you hand the agent a document covering what it needs to do, what constraints it has, and what “done” looks like. In my experience, the first-pass output is consistently better.

The spec also changes validation. With acceptance criteria written before the code exists, you’re not squinting at output wondering “is this roughly right?” You have concrete things to check. Deviations get caught early.
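To make "concrete things to check" tangible, here is a sketch in which the acceptance criteria exist as data before any implementation does, and are then run against whatever the agent produces. The criteria, their IDs, and the `is_locked` function are invented for the example.

```python
from dataclasses import dataclass
from typing import Callable

# Shape of the function under test: failed attempts -> is the account locked?
LockRule = Callable[[int], bool]


@dataclass(frozen=True)
class Criterion:
    crit_id: str
    description: str
    check: Callable[[LockRule], bool]


# Written from the spec, before the implementation exists.
CRITERIA = [
    Criterion("AC-1", "Four failed attempts do not lock the account",
              lambda rule: rule(4) is False),
    Criterion("AC-2", "Five failed attempts lock the account",
              lambda rule: rule(5) is True),
]


def evaluate(rule: LockRule) -> dict[str, bool]:
    """Run every criterion against an implementation and report pass/fail."""
    return {c.crit_id: c.check(rule) for c in CRITERIA}


def is_locked(failed_attempts: int) -> bool:
    # A plausible-looking implementation with an off-by-one error.
    return failed_attempts > 5


results = evaluate(is_locked)
print(results)  # AC-1 passes, AC-2 fails
```

The point is the direction of authority: the implementation is judged against criteria it did not write.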

It costs something upfront, maybe an hour for a substantial feature. But that hour replaces the three or four you’d spend in the correction loop, and produces an artifact that survives the session.

(AI is also good at helping you write specs. Describe roughly what you want, let it ask probing questions, and have it draft the requirements. You own the final spec, but the drafting can be collaborative.)

The tooling landscape

A growing number of tools are tackling spec-driven development from different angles. I’ll write about some of these in more detail in future posts, but here’s a quick map of the space:

- Taproot — my project. Full-process SDD: requirements as repo files, dual representation (spec + code), traceability, and commit-time enforcement. Agent-agnostic, works with existing codebases.

- BMAD — multi-agent planning framework. Specialised AI personas (PM, Architect, Scrum Master, Dev) that produce structured handoffs from brief to implementation.

- GitHub Spec Kit — open-source CLI with a “constitution” concept for project-wide governance. Agent-agnostic, four-phase workflow.

- Amazon Kiro — VS Code-based IDE with native spec mode. Spec-driven workflows built into the editor.

- Tessl — the most radical approach. The spec is the maintained artifact; code is fully generated.

Many developers are already doing lightweight SDD without calling it that. If you maintain a CLAUDE.md file that gives your agent persistent context, you’re practising the core principle. The tools add structure, and in some cases, enforcement.

This isn’t waterfall

“Sounds like writing requirements before code. Didn’t we spend twenty years getting away from that?”

Fair. But waterfall assumed you could get requirements right upfront and execute linearly. SDD assumes you can’t, and bets that living documentation makes iteration faster and safer. Specs evolve. The difference is that changes are tracked and propagated, not patched into a chat and lost when you close the tab.

If anything, SDD is closer to the original spirit of agile. User stories were always a contract between stakeholders and developers. SDD extends that contract to include AI.

Try it

You don’t need a framework to test this. Pick a feature with enough moving parts that you’d normally need several rounds of prompting. Before opening your AI assistant, spend thirty minutes writing a markdown doc: what you’re building, why, what constraints exist, what “done” looks like.

Hand that to your agent alongside the implementation request. See what happens.
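For a sense of scale, the sketch below embeds one possible shape for that thirty-minute doc and adds a small check that none of the four parts was skipped. The heading names, the example feature, and the `missing_sections` helper are all hypothetical. Use whatever structure suits your project.

```python
import re

# Hypothetical heading names matching the four questions above.
REQUIRED_SECTIONS = ("What", "Why", "Constraints", "Done")


def missing_sections(spec_markdown: str) -> list[str]:
    """Return the required sections that have no matching markdown heading."""
    found = re.findall(r"^#+\s*(.+)$", spec_markdown, re.MULTILINE)
    headings = {h.strip().lower() for h in found}
    return [s for s in REQUIRED_SECTIONS if s.lower() not in headings]


SPEC = """\
# What
Email and password login for the admin dashboard.

# Why
Admins currently share one hard-coded credential.

# Constraints
- No new dependencies.
- Do not add features that are not listed here.

# Done
- Valid credentials open the dashboard.
- Five failed attempts lock the account for 15 minutes.
"""

print(missing_sections(SPEC))  # an empty list means all four parts are present
```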

If the result convinces you, pick a tool from the list above, or just keep using markdown and your existing agent. The value is in the thinking you do while writing the spec, not in any particular framework.

The best AI engineers of 2026 won’t be the best prompters. They’ll be the ones who figured out that a good spec is the highest-leverage input you can give.